It’s time for an update on Firefox JS performance. This post will take a look at some benchmark scores, and then dive deeper into what those benchmarks actually measure.

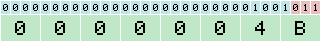

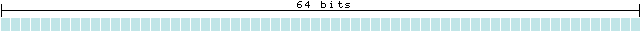

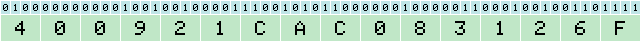

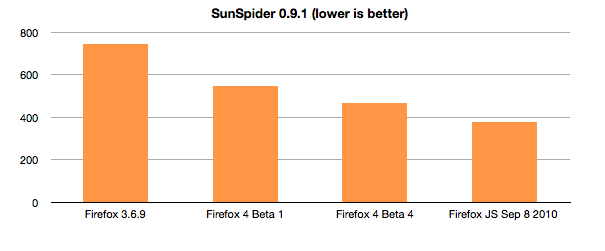

Now that JaegerMonkey is available, it’s time for an update on some familiar benchmarks. Contributors to the Mozilla JS engine are making performance improvements throughout the Firefox 4 development cycle, and the progress has been pretty rapid. I ran a bunch of modern browsers on a Lenovo Thinkpad X201s running Windows 7. Here’s how Firefox has progressed on the SunSpider benchmark:

As you can see, Firefox is making rapid progress here. The chart below shows us relative to the competition. The gap here is much narrower than it used to be, and we have more improvements coming.

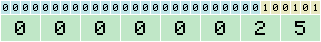

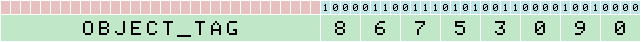

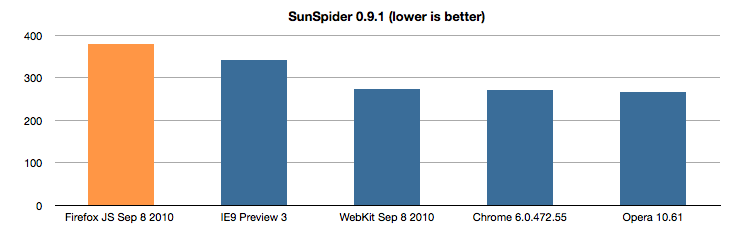

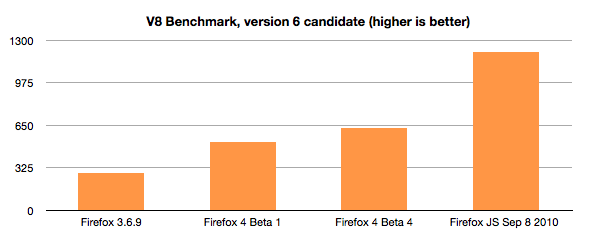

On the V8 benchmark from Google, Firefox’s score is improving more dramatically.

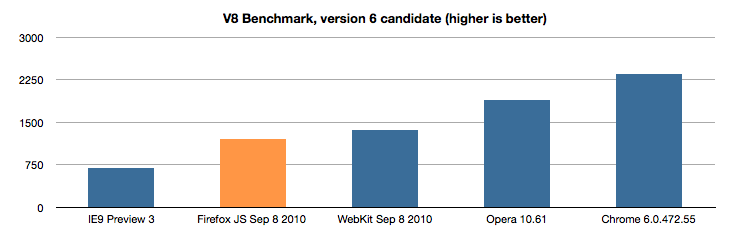

Here’s our V8 score relative to other modern browsers:

So, that’s where things stand right now on a couple of benchmarks, but expect updates from us in the near future.

What are these tests measuring?

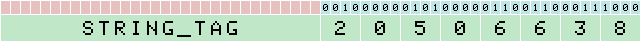

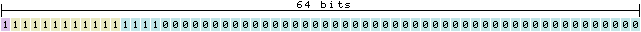

The answer to this question is difficult. Benchmarks like these are supposed to measure the execution speed of JavaScript, but they often end up measuring very unrealistic workloads. For example, the code below is the entirety of SunSpider’s bitwise-and.js test:

```javascript
bitwiseAndValue = 4294967296;

for (var i = 0; i < 600000; i++)
    bitwiseAndValue = bitwiseAndValue & i;
```

I'm not sure measuring the speed of this loop is very useful, but Firefox is the world champion at running it.
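To see why this loop says so little about real code, it helps to trace what the `&` operator does with that starting value. The sketch below is an illustration rather than part of SunSpider: the helper names `toInt32` and `runBitwiseAnd` are made up for this example. Bitwise operators coerce their operands to 32-bit integers, and 4294967296 is 2^32, so it wraps to 0 before the first AND is even computed.

```javascript
// Illustrative helper (not part of SunSpider): "x | 0" applies the same
// 32-bit integer coercion that the & operator applies to its operands.
function toInt32(x) {
  return x | 0;
}

// 4294967296 is 2^32, which wraps around to 0 as a 32-bit integer.
var wrapped = toInt32(4294967296);

// The same loop as the benchmark, using a local variable instead of a global.
function runBitwiseAnd(initial, iterations) {
  var value = initial;
  for (var i = 0; i < iterations; i++) {
    value = value & i;
  }
  return value;
}

var result = runBitwiseAnd(4294967296, 600000);

console.log(wrapped); // 0
console.log(result);  // 0: the value is 0 after the first iteration and never changes
```

In other words, the test times 600,000 iterations of ANDing zero with a loop counter.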

A bigger problem with many current benchmarks is that there's a temptation to cache results where possible. That can really pump up a score, but the likelihood of such a cache being useful in non-benchmark code is quite low. For instance, all engines now have a cache for eval strings (here's ours). This cache is occasionally useful in real-world code: sites like digg.com and others were found to hit the cache. However, the cache results in a huge speedup on SunSpider, which overstates its importance.
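As a rough illustration of why such a cache flatters a benchmark, here is a minimal sketch of a cache keyed by the exact source string. This is not how any engine implements its eval cache: `makeCachedEval` is a hypothetical helper, and it uses `new Function` as a stand-in for compilation, which does not have the same scoping behavior as `eval`.

```javascript
// Hypothetical sketch: cache compiled code keyed by the exact source string.
// "new Function" stands in for the engine's compile step.
function makeCachedEval() {
  var compiled = new Map();
  var stats = { hits: 0, misses: 0 };

  function run(source) {
    var fn = compiled.get(source);
    if (fn === undefined) {
      stats.misses++;
      fn = new Function("return (" + source + ");");
      compiled.set(source, fn);
    } else {
      stats.hits++;
    }
    return fn();
  }

  return { run: run, stats: stats };
}

// Benchmark-style usage: the same string is evaluated over and over.
var benchmarkStyle = makeCachedEval();
for (var i = 0; i < 1000; i++) {
  benchmarkStyle.run("1 + 2");
}

// Varied usage: every string is different, so the cache never helps.
var variedStyle = makeCachedEval();
for (var j = 0; j < 1000; j++) {
  variedStyle.run(j + " + 2");
}

console.log(benchmarkStyle.stats); // { hits: 999, misses: 1 }
console.log(variedStyle.stats);    // { hits: 0, misses: 1000 }
```

The first loop pays for compilation once and then measures nothing but cache lookups, which is the pattern that inflates a score.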

Yet another problem we've had is that many benchmarks don't actually do anything, so they are very prone to mistakes. Google's V8 benchmark includes a test called "splay" which does some splay tree operations. However, since it isn't used in an actual program, the test has had several problems. First, we found that the test spent all of its time converting numbers to strings. Later, we found that the benchmark was adding and inserting nodes into the splay tree in an unrealistic pattern. To their credit, the V8 team has been relatively quick to fix these issues as we discover them. However, the root cause of these problems is that the benchmark doesn't run a real program.

Mozilla is also guilty of writing bad tests. Our Dromaeo test suite is full of little tiny loops and unrealistic workloads. The Dromaeo suite contained regex and string tests written in a way that made it easy to use a one-element cache for some tests. We had to fix that, but there are still lots of other similar issues.
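To make the one-element cache problem concrete, here is a small sketch under stated assumptions. It is not taken from Dromaeo or from any engine: `makeOneElementMatcher` is a hypothetical helper that remembers only the most recent pattern and input pair. A tiny loop that repeats one input hits that cache almost every time, while merely alternating between two inputs defeats it completely.

```javascript
// Hypothetical one-element cache: remembers only the most recent
// (pattern, input) pair and its result.
function makeOneElementMatcher() {
  var lastKey = null;
  var lastResult = null;
  var stats = { hits: 0, misses: 0 };

  function match(pattern, input) {
    var key = pattern.source + "/" + pattern.flags + "\u0000" + input;
    if (key === lastKey) {
      stats.hits++;
      return lastResult;
    }
    stats.misses++;
    lastKey = key;
    lastResult = pattern.test(input); // non-global regex, so test() is stateless
    return lastResult;
  }

  return { match: match, stats: stats };
}

var pattern = /a+b/;

// Tiny benchmark loop: the same input every iteration.
var tinyLoop = makeOneElementMatcher();
for (var i = 0; i < 1000; i++) {
  tinyLoop.match(pattern, "aaab");
}

// Slightly more realistic: two inputs that alternate.
var alternating = makeOneElementMatcher();
var inputs = ["aaab", "xyz"];
for (var j = 0; j < 1000; j++) {
  alternating.match(pattern, inputs[j % 2]);
}

console.log(tinyLoop.stats);    // { hits: 999, misses: 1 }
console.log(alternating.stats); // { hits: 0, misses: 1000 }
```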

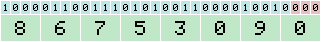

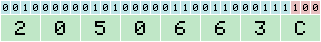

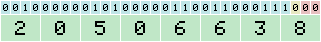

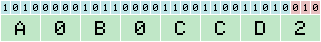

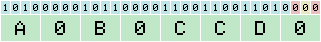

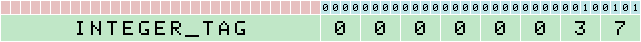

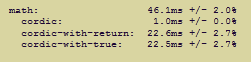

One last issue that can crop up has to do with over-specialization for a specific test. While I was running the SunSpider tests above, I noticed that IE9 got a score that was at least 10x faster than every other browser on SunSpider's math-cordic test. That would be an impressive result, but it doesn't seem to hold up in the presence of minor variations. I made a few variations on the test: one with an extra "true;" statement (diff), and one with a "return;" statement (diff). You can run those two tests along with the original math-cordic.js file here.

All three tests should return approximately the same timing results, so a result like the one pictured above would indicate a problem of some sort.
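The same kind of check can be sketched as a small harness. The workload below is a stand-in, not the real math-cordic.js, and the names `stepOriginal`, `stepWithTrue`, `stepWithReturn`, and `timeVariant` are made up for this example. The three step functions differ only by a statement that has no effect, so they must produce identical results and should take roughly the same time.

```javascript
// Stand-in workload (NOT the real math-cordic.js): each step adds to a
// shared accumulator. The variants differ only by a no-op statement.
var acc = 0;

function stepOriginal(i) {
  acc += Math.sqrt(i) * 0.5;
}

function stepWithTrue(i) {
  acc += Math.sqrt(i) * 0.5;
  true; // extra expression statement with no effect
}

function stepWithReturn(i) {
  acc += Math.sqrt(i) * 0.5;
  return; // explicit return with no value
}

// Runs one variant and reports its result and elapsed wall-clock time.
function timeVariant(step, iterations) {
  acc = 0;
  var start = Date.now();
  for (var i = 0; i < iterations; i++) {
    step(i);
  }
  return { result: acc, ms: Date.now() - start };
}

var iterations = 1000000;
var timings = {
  original: timeVariant(stepOriginal, iterations),
  withTrue: timeVariant(stepWithTrue, iterations),
  withReturn: timeVariant(stepWithReturn, iterations)
};

Object.keys(timings).forEach(function (name) {
  console.log(name + ": " + timings[name].ms + " ms");
});
```

A single run like this is noisy, so small differences mean little. What would stand out is one variant being many times faster than the other two.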

We're excited about the speed improvements we've already made for Firefox 4, and even more excited about those yet to come. We hope that all of the improvements will speed up code that our users run. And we'll keep hammering on those benchmarks.